Insights from The Lab Consulting

Business Standardization 101

Introduction to Standardization in Business

Standardization can confer numerous competitive benefits to businesses, from the efficiencies gained in the manufacturing production line, to the massive, untapped productivity gains available to knowledge workers, to the implementation of cutting-edge performance-enhancing technologies such as robotic process and intelligent automation (RPA), business intelligence and advanced analytics, and process mapping for customer experience (CX) enhancement.

Despite all that potential, it can be difficult to find a consistent and usable definition for standardization in business—let alone the actual standards that result from it.

In this article, we will definitively answer the question, “What is standardization?” We will trace its history and development. We will define and explore the different types of standards. We will show the numerous benefits and advantages of standardization. And we will outline the most effective strategies for tapping into the single, most competitively valuable source of standardization in business: innovation.

But first, take a look at this short video, which reviews the history of business standardization and explains why knowledge work standardization is the prerequisite for AI and automation:

What is standardization?

Standardization is any process used to develop and implement metrics (i.e., “standards”) that specify essential characteristics of something whose control and uniformity are desired. Thus, standardization can apply to just about anything, including:

- Rules/Laws

- Technologies

- Products

- Services

- Behaviors

- Measurements

Limits (and upsides) of the standardization definition

While the above, summary-level definition is accurate, it lacks sufficient detail to explain and exploit the transformative role of standardization in business—both in its historic past and its future potential. Capitalizing on standardization in business requires further definition of standardization processes, as well as the standards that they produce.

Finding this sharper focus is inherently difficult, given the following “Standardization Paradox”:

The basic irony of standards is the simple fact that there is no standard way to create a standard, nor is there even a standard definition of “standard.” [1]

So while standardization processes, and the standards themselves, are often poorly (even elliptically) defined, finding this sharper focus is possible, even straightforward. It begins by recognizing, even embracing, the above Paradox. That directs perception of new opportunities toward unconventional, under-standardized areas. These offer opportunities to develop newer, more rigorous processes that extend the conventional footprint of standardization and reap the resulting, time-tested benefits. These are the essential prerequisites for harnessing new, business-empowering technologies such as robotic process automation (RPA), business intelligence (BI), advanced analytics, machine learning, and artificial intelligence (AI). None of these technologies can live up to their promise of augmenting, not to mention replacing, human activities until these activities are standardized. And currently, humans are best positioned to perceive and perform this standardization.

Deliberate vs. de facto standardization with examples

Some standardization processes are launched intentionally and managed formally. Others develop casually and evolve relatively unmanaged:

Deliberate standardization processes are knowingly and intentionally defined, adopted, and enforced. They’re often centrally managed. They typically include documentation, formal reviews, and analysis, all to deliver a set of published standards; indeed, deliberate standardization processes are what most people associate with the term “standardization.” Examples include:

- Product standards that deliver consistent-tasting meals across a franchise restaurant network.

- Technical standards that enable connectivity across a global telecommunications network.

More examples, and details, will be provided in the Types of standards section below.

De facto standardization processes, by contrast, often emerge spontaneously, and proceed in a decentralized fashion. Despite broad input and wide adoption, they’re rarely managed. Instead, they’re typically informal, undocumented, and context-specific or interpretation-dependent. Thus de facto standardization processes can hide in plain sight; they’re easily overlooked and taken for granted. De facto standardization processes are what result from “crowd-sourcing,” although most people don’t give these a second thought. Examples of de facto standardization processes include:

- Everyday rules of thumb.

- Know-how.

- Tribal knowledge.

- Data contained within a business letter, email, or even an invoice.

- Trends in language variation, e.g., slang or idioms.

- Mobile phone emoticons, e.g., text or images.

What the above examples have in common, aside from their spontaneous roots, is that human, rather than machine, interpretation of standards is often essential for even trivial tasks that rely on de facto standardization processes: for example, interpreting, selecting and extracting data from a loosely structured document such as an email. The de facto standardization characteristics of operations make them harder to automate, whether mechanically or digitally.
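To make the automation point concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not a description of any real system: the invoice fields, the sample emails, and the regular expression are all hypothetical. It contrasts reading an amount from a deliberately standardized record with reading the same amount from free-text emails.

```python
import json
import re
from typing import Optional

# Deliberate standard: a hypothetical invoice record with agreed field names.
structured_invoice = '{"invoice_number": "INV-1042", "amount_due": 1250.00, "currency": "USD"}'

# De facto standard: the same facts as people might actually write them in emails.
emails = [
    "Hi team, invoice INV-1042 is attached. Amount due: $1,250.00. Thanks!",
    "Please pay twelve hundred fifty dollars against our last invoice (no. 1042).",
]


def amount_from_structured(raw: str) -> float:
    """With a published schema, extraction is a simple field lookup."""
    return float(json.loads(raw)["amount_due"])


# A pattern written for one phrasing only (an assumption, not a general parser).
AMOUNT_PATTERN = re.compile(r"Amount due:\s*\$([\d,]+\.\d{2})")


def amount_from_email(text: str) -> Optional[float]:
    """Return the amount if the email matches the expected phrasing, else None."""
    match = AMOUNT_PATTERN.search(text)
    if match is None:
        return None  # a person would have to read and interpret this email
    return float(match.group(1).replace(",", ""))


print(amount_from_structured(structured_invoice))
for email in emails:
    print(amount_from_email(email))
```

The structured record is read with a single lookup. The pattern handles the first email but returns nothing for the second, which states the same amount in words; that is the kind of interpretation a person performs without noticing.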

Challenges of de facto standardization in business

While deliberate and de facto standardization processes can exist simultaneously, the distinction between them is simple and stark:

Deliberate standardization processes are subject to greater scrutiny, analysis, and evaluation. Therefore, the standards which emerge from these processes are typically well-tested and explicitly documented. It’s easy to locate their documented outputs, such as:

- Specifications

- Rule books

- Ingredient lists

- Building codes

- Safety regulations

- And countless others

Similarly, locating the organizations that authored these standards is a straightforward exercise.

Examples:

- CEN – The European Committee for Standardization (French: Comité Européen de Normalisation)

- W3C – The World Wide Web Consortium

- SAE International – initially established as the Society of Automotive Engineers

- ISO – The International Organization for Standardization

By contrast, de facto standardization processes—and the standards that emerge from them—can often be illogical, ineffective, and even counterproductive. When they’re longstanding, widely-held, and unquestioned, they present a significant barrier to the introduction of deliberate standardization—forming the basis of an “anti-standardization bias” that is difficult to recognize and overcome.

Examples of deliberate standardization

Deliberate standardization processes range from the casual and ad hoc to the formal and legal. Depending on the parties developing the standards, as well as their objectives, four broad categories of intentionally-developed standards emerge:

- Laws. Mandated standardization process, with the objective of enforcement.

- Voluntary development. Collaborative standardization process, with the objectives of coordination and interoperability.

- Preferences. Informal, ad hoc, even unconscious standardization process, with objectives varying from market dominance to least effort/most convenience.

- Innovations. Conscious, directed standardization processes, with the objective of achieving a strategic advantage, whether it is technical, economic, business-related, etc.

All of these categories—including the especially important fourth one, “Innovations”—will be discussed in greater detail in the Types of standards section later in this article.

Examples of de facto standardization

Among the less-obvious de facto standardization processes which persist in business today, five categories can be defined:

- Perception of facts. The earliest “modern” notions of facts are about 200 years old. These include standard definitions, normalized comparisons, and statistical analytics.

- Data visualization. Common techniques for data visualization, which pervade today’s PowerPoint presentations, originated in the latter half of the 1800s. As recently as the 1920s, these were still considered new; progressive advocates decried the infrequent use of leading edge tools such as pie charts and line graphs.

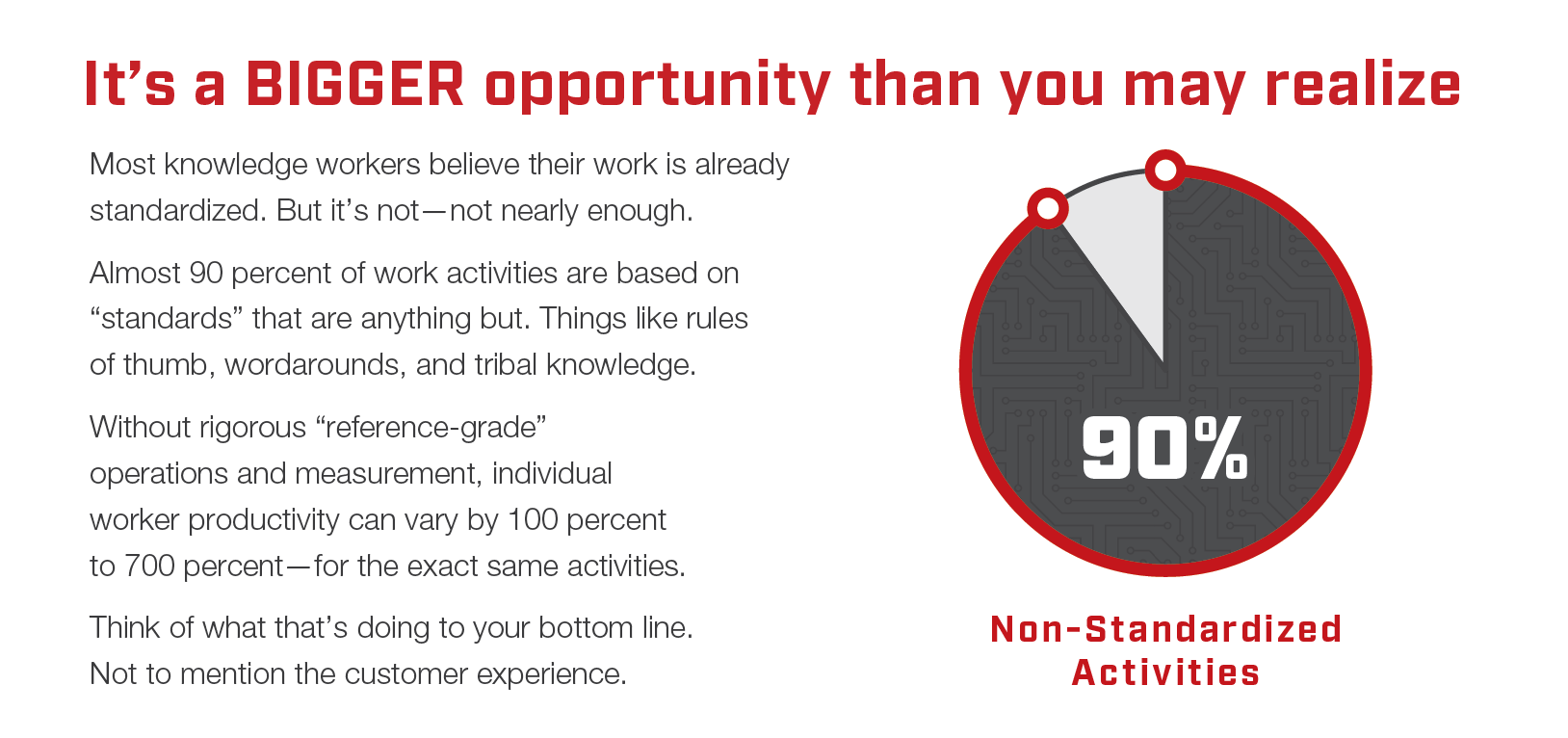

- Rules of thumb. This under-noticed category of business standards—which is not intended to be scientifically accurate—develops informally, based on experience and common-sense judgement. Most are perceived to be so obvious that no one bothers to write them down. They exist across organizations as a form of collective knowledge and an unintended, overlooked source of costly variance.

- Tribal knowledge. This refers to any unwritten information that is not commonly known by others within a company, yet may need to be known by them. Unlike skill, tribal knowledge can be converted into a company asset through standardized documentation.

- Workarounds. These are informal detours around known problems or limitations. Workarounds emerge as immediate solutions to the problems that result from the imprecision of de facto standards, creating a vicious cycle of inefficiency. Common examples:

  - Extra field: if an electronic business form or database has no place or field to add a note or classification, workers hijack an existing field and use it for their alternative purpose.

  - Disablement: workers often figure out how to disable features in technology so they can take shortcuts or avoid detection (e.g., to use external websites or pirated data sources).

  - Software: technology developers often leave a “back door” in their applications to act as a shortcut or to let them evade detection.

  - Legal: if workers are classified as “temps” or “contractors,” employers may be able to evade payroll taxes, overtime rules, etc.; this may even let them evade their company’s own rules, for example, a “hiring freeze” that prohibits adding new employees to the payroll.

History of standardization

The history of standardization, just like the practice of standardization, is far from written. It is still evolving. The conventional history of standards in business is rife with gaps: It begins in ancient times with the evolution of weights and measures—and then leaps forward thousands of years to the industrial revolution that began in the late 1700s. It typically ends with a dry review of the regulatory bodies (such as Underwriters Laboratories) that emerged in the early 1900s to set standards for power, communications, and a host of then-emerging technologies. But that’s not a history of standardization; it’s largely a history of technology, with an institutional history of standards organizations bolted onto it. The gaps in the conventional history of standardization create “blind spots,” or oversights, that represent valuable opportunities to improve business performance – especially in office, or knowledge work, operations.

- Standardization processes originate from the four fundamental sources noted earlier and detailed below (i.e., laws, voluntary development, preferences, and innovations).

- Competitive value from standardization in business derives primarily from just one of those sources: Innovation.

- Innovation strategies for standardization have evolved historically across four increasingly sophisticated categories (detailed below, after standardization sources).

- The results of successful innovation, the standards, often evolve through sequential historical “lifecycles.” We will explain these after reviewing the four innovation strategies.

The four sources of standardization

Source 1: Laws. In de jure (“by right”) development, standards are mandated by legislation, rulings, regulations, and interpretations thereof to meet the needs of courts and enforcement bodies charged with overseeing contracts, agreements, and compliance. Examples include:

- Tax laws

- Environmental regulations

- Building codes

This source of standardization in business has persisted throughout history. Seven thousand years ago, in ancient Babylon, clay tablets were employed to track merchants’ accounting figures. These were used for contracts, debt obligations and even demands for war reparations.[1] And in the United States in 1917, for example, there were 994,840 varieties of axes available for sale. Under Herbert Hoover’s newly-created National Bureau of Standards, a far smaller number was mandated—along with such mundane yet important items as nuts, bolts, and the wrenches to turn them.[2]

[1] BBC.com, “How the World’s First Accountants Counted on Cuneiform,” Tim Harford, June 2017.

[2] Heitman, William, The Knowledge Work Factory, New York: McGraw-Hill, 2019, pp. 25, 56.

Source 2: Voluntary development. Here, standards are created intentionally and implemented via collaboration and cooperation. Examples include:

- The North Atlantic Treaty Organization or NATO. The NATO Standardization Office (NSO) creates military standards and specifications common to all treaty members and followed by relevant manufacturers and services providers.

- Telecom interoperability standards, which include network operating standards, and can extend all the way to consumer product interfaces for everything from .

Again, the influence of these sources can be traced throughout history. Ancient Egyptians standardized the work on the pyramids by dividing it among skilled craftsmen, artisans, stonecutters, and porters (precursors of later trade guilds). Modern examples include crowdsourced computer code, such as Linux and PHP, and crowdsourced content, such as Google Translate and Wikipedia.

Source 3: Preferences. Contrasted to the de jure (“by right”) development described above, preference-driven standards are more de facto (“in effect”). They emerge as a result of widely-preferred work methods, technology, and product and customer characteristics. They may be catalyzed by a diversity of factors, whether planned or unintended, such as convenience, market forces, tradition, and perception. Examples include:

- Computer operating systems, including Apple iOS, Microsoft DOS, and Windows.

- The moving assembly line.

- Data visualization, including the organization chart, and pie and bar charts.

- Facts (as we know them in the standards realm), including definitions, normalized comparisons, and statistical significance; these largely emerged in the late 19th century in Britain.

Preferences, as the basis of standards, can be traced back for generations. The Society of Automotive Engineers, founded in 1905, was instrumental in standardizing everything from the size of wrenches to the viscosity of motor oil.[1] Relatively recent preference-based standards include Linux and DOS for computers, and iOS and Android for phones.

[1] “What Does SAE Stand for in Motor Oil?”

Source 4: Innovation. This is the most important category for generating business transformation value. Individuals and businesses alike perceive standardization as an opportunity to achieve a competitive advantage, and continually seek out new ways to apply its fundamental principles that enable both specialization and division of labor, among workers and machines. Examples are numerous.

The four innovation strategies for standardization

Strategy 1: Division of Organizational-level Labor (DOL). Divides work performance at the organization, or group, level – the most obvious category of work activity – and specializes it among these groups. In business today, DOL is often based on markets, products, industries, companies, functional disciplines and more. Management objectives converge at these summary levels. In other words, both the work manager, or “divider,” and the work performer, or “dividee,” seek the benefits of specialization and standardization from this division of organizational labor.

DOL Example: Think of how a building is erected. The overall work is divided into categories such as design, financing and construction. Architectural firms compete for the design work. Lenders specialize in “financing.” Within the “construction” category, a General Contractor acts as a professional “divider” of the construction work, awarding contracts to specialists in excavation, plumbing, structural steel and more.

Self-Perception, The Standardization Blind Spot: Viewed over the course of time, standardization strategies reveal the evolution of organizations’ ability to re-imagine and re-invent their own work methods – their self-perception. DOL ranks as the earliest emergence of this timeless, challenging but valuable task: the hunter-gatherers of pre-history divided and specialized their work by group. Ancient Egyptians developed complex, hierarchical divisions of work that rival modern-day organization charts. They also produced straight pins. So why did forty centuries elapse before anyone re-imagined and re-invented standardization for this humble product? The makings of the Industrial Revolution were “hiding in plain sight” – the blind spot of organizational self-perception – in the de facto production standards used for straight pin manufacturing from ancient Egypt to the shop floors of 18th-century European pin factories (details below).

Strategy 2: Division of Activity-level Labor (DAL). Divides and “unbundles” work activities – the most granular, least-obvious labor tasks – conventionally performed by a single, generalist worker and reallocates these among multiple, specialist workers. Specialization is achieved by regrouping activities and re-directing each worker to perform fewer, more-standardized activities – increasing their proficiency and productivity. This re-perception of labor transforms a communal workroom into an integrated factory. Ideally, both “divider” and “dividee,” i.e., employer and “specialized” worker, share the economic benefit.

DAL Example: Think of an early manufacturing plant with a manual production line. In 1776, Adam Smith documented how the straight pin manufacturers of his day profited from DAL. Traditionally, their workers had each produced pins individually, start-to-finish in a single location. This represents the “workroom” model of factory production. But, by dividing and unbundling each worker’s activity-level labor, the work could be reallocated unconventionally. Each worker could specialize in a narrow portion of the start-to-finish process – perhaps even performing only a single task. Each worker essentially became a single, highly specialized mini-factory within the scope of the same workroom. Importantly, each worker still retained the authority to manage precisely how they performed their assigned, specialized activities (more about this below). Average daily output-per-worker grew 240-fold, from 20 to 4,800 pins. Smith also noted how this strategy (i.e., DAL standardization) would enable increased levels of automation. The simpler, standardized activities were more feasible for processing by machinery – designed for “machinability” in modern manufacturing vocabulary.

Infrastructure, Incentives, Chicken and Egg: Why didn’t the ancient Egyptians, who divided labor at the organizational level, also perceive the similar, activity-level, 1776 “pin factory” opportunity? Why didn’t they keep dividing labor? After all, they were the standardization experts of their day. Perhaps there was no competitive incentive to optimize the productivity of abundant slave labor. There was certainly no infrastructure (e.g., clocks, hours) to precisely measure productivity. But by 1776, infrastructure improved, and competition for manufacturing labor intensified. The challenges of organizational self-perception were suddenly overcome. Businesses began to re-imagine and re-invent their activity-level work methods and innovative new standardization strategies appeared. Did the “invention” of standardization spark the Industrial Revolution – like the textbooks say? Or did the competition for scarce labor in England simply reawaken the universal, timeless technique of productivity improvement, dating from pre-history – standardization?

Modern Day Workrooms: Today, DAL remains one of the most valuable, under-perceived and under-utilized standardization strategies for innovation. Surprisingly, the workroom methods of the 18th century still thrive, “hiding in plain sight” in office, or knowledge work organizations like Finance and Accounting, Marketing, Order Management, Engineering and across entire industries of knowledge workers: financial services, health care, and many others. And the past century of office automation – mechanical and digital – has failed to make a noticeable improvement in these workers’ productivity. The problem has come to be known as the Productivity Paradox.[1]

Strategy 3: Division of Activity-level Management (DAM). Separates the management duties for optimizing activity-level work tasks from the laborers who perform them. These activity-level optimization duties – observation, analysis, valuation, prioritization, methods planning, automating, etc. – are then standardized and specialized. DAM identifies, documents and implements the “best practices” for optimizing activity-level labor. Think of the standardized activities of an industrial engineering organization. In other words, DAM is the strategy of dividing, standardizing and specializing the work activities that make up a standardization process. Put simply, it is the elliptical, perceptually challenging strategy of “standardizing standardization.” DAM is arguably history’s most economically valuable standardization strategy, accounting for the vast accumulation of wealth from several waves of industrial revolutions over the past two centuries. Perhaps that’s because – at least in the factory – DAM overcomes the contradictions and perceptual challenges posed by the poor definition of standardization processes and the inconsistent standards that result (outlined at the start of this article):

The basic irony of standards is the simple fact that there is no standard way to create a standard, nor is there even a standard definition of “standard.” [2]

This statement is simply not true on the factory floor today.

DAM Example: Think of the earliest moving assembly lines for complex products such as bicycles, appliances and autos. To realize their promise of productivity, these required activity-level management capabilities far beyond those of individual supervisors and workers. A vast, accessible body of manufacturing knowledge was needed – scientific, technical and practical (know-how). Developing and managing this body of knowledge was indistinguishable from developing and managing these new, high speed, standardized production lines. In order to standardize the manual assembly labor and synchronize the plant operational flow, manufacturers had to similarly standardize and synchronize the related body of knowledge. These physical factories, which processed tangible, standardized products, were supported by parallel knowledge work “factories” that processed the intangible standardized products of knowledge workers – bills of material, instructions, specifications, and best practices. Competitive survival was at stake. Out of necessity, manufacturers developed in-house standardization departments, staffed with newly designated industrial engineers who were charged with developing this body of knowledge. Not surprisingly, they “standardized” these white collar, or Knowledge Work (KW) standardization processes. They divided standardization management activities into specialized organizations (“KW factories”) – equipment design, materials engineering, work methods research, labor standards and more. Increased competition drove initial creation of knowledge work “factories.” Later, increased regulation drove creation of new KW factories for safety, environmental controls, consumer protection and more. The external standardization efforts of manufacturers created industry-wide, cooperative KW factories such as the Society of Automotive Engineers (now SAE International).[3] And yet, this valuable standardization of knowledge work stopped at the edge of the factory floor. It did not naturally migrate into the white collar segments of the same businesses.

Perceiving New KW “Factories”: The idea of the “standardized knowledge work factory” for management was most famously articulated by Frederick Winslow Taylor as a part of his legendary 1911 treatise, The Principles of Scientific Management. Although it remains almost exclusively perceived as Taylor presented it, i.e., relevant to manual labor in conventional, tangible factories, its application can be vastly extended to all areas of office and knowledge work. As we’ll see in the next section, the concept was successfully pioneered in knowledge work shortly after Smith published his 1776 treatise – well over a century before the miracle of the moving assembly line and the rise of mass production. Since then, many others have also proven its feasibility. Today, the competitive incentives and technical infrastructure for knowledge work standardization have long been in place. What’s missing? The capacity to perceive knowledge work standardization as a valuable innovation strategy has been lagging for a century.

Strategy 4: Division of Perception Management (DPM). Separates the management activities for critically strategic business perceptions from managers and knowledge workers. These management activities, e.g., identifying waste, defining value and prioritizing customer needs, are specialized in an “industrial engineering-style” department. Its goal is to capture the business value lost to predictable perception deficiencies: cognitive biases and logical fallacies, as well as under-documented, inefficient “tribal knowledge” management methods, rules of thumb and others. DPM standardizes “best perceptions” for optimizing both knowledge work design and performance – division of labor, specialization, and automation. It resembles the DAM approach for standardizing the best practices for factory labor.

DPM Example: From 1909 until the late 1960s, Detroit’s automakers were the industry’s undisputed masters of manufacturing productivity. During the early years, until 1923, it wasn’t unusual for The Ford Motor Company to repeatedly increase the productivity of its workers by 2x to 8x – every few years! But then, over the 50 years from 1923 to 1973 the company only managed to double this productivity.[4] U.S. management perceived that the big gains were long over. But Japanese automakers, relatively new to the business in the 1960s, focused on managing perceptions to improve productivity. Initially this represented a radically different approach that directly contradicted American methods. Toyota management identified and standardized the definition of seven overlooked categories of manufacturing process wastes. These seven “new” wastes hide in plain sight. The majority of these wastes are “intangible,” such as excess motion, effort and delay. This presented a perceptual challenge. Despite years of incontrovertible evidence, Detroit’s conventional, Big Three auto manufacturers struggled to perceive and acknowledge the significance of these unconventional new wastes. Their executives resisted adopting new perception management techniques to overhaul their standardization methods and incorporate the new “lean principles” necessary to rid their operations of this costly, intangible waste. Over the 1970-1985 period, the Japanese became 2.5 times more productive than their U.S. competitors.[5] Their competitive survival at stake, American manufacturers were forced to capitulate and adopt lean manufacturing. They modernized their perception management, adopting the Japanese definitions of waste. The Japanese had demonstrated the value of giving perception management equal weight with activity management in manufacturing standardization processes. The irony? U.S. automakers had proudly – and inadvertently – invented perception management disciplines, from scratch, as an integral part of their DAM standardization efforts at the dawn of the moving assembly line, beginning in 1909. They failed to see how it could be separated from manufacturing and applied broadly to any standardization innovation process. But then, so has almost everyone else.
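To put the slowdown described above in comparable terms, the short Python sketch below converts a total productivity multiple into an implied compound annual rate. The 50-year doubling comes from the text. The "2x in 3 years" figure is only an assumed reading of "every few years," chosen for illustration, and the function name is hypothetical.

```python
def annualized_growth(multiple: float, years: float) -> float:
    """Compound annual growth rate implied by a total multiple over a span of years."""
    return multiple ** (1.0 / years) - 1.0


# From the text: productivity only doubled over the 50 years from 1923 to 1973.
late_era = annualized_growth(2.0, 50)

# Assumption for illustration only: "2x every few years" read as 2x in 3 years.
early_era = annualized_growth(2.0, 3)

print(f"1923-1973: {late_era:.1%} per year")
print(f"Early years (assumed 2x in 3 years): {early_era:.1%} per year")
```

Under these assumptions, the later era works out to roughly 1.4% a year, against roughly 26% a year for even the low end of the early gains. A slowdown of that scale helps explain why U.S. management concluded that the big gains were over.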

Recognizing Value in Contradiction: Trying to directly perceive paradoxical gaps in perception capabilities can initially seem as elliptically elusive as standardizing standardization. Not surprisingly, everything appears “normal.” Contradictions can highlight these gaps. However, “confirmation bias,” and other quirks of human cognition, make contradictions initially difficult to consider and even harder to ultimately accept. Confirmation bias is the tendency to search for, interpret, favor, and recall information in a way that confirms one’s preexisting beliefs or hypotheses.[6] And even when these contradictions are conclusively proven to devalue the business, “belief bias” makes it more comfortable to reject conclusions that contradict existing beliefs. Belief bias is the tendency to judge the strength of arguments based on the plausibility of their conclusion rather than how strongly they support that conclusion.[7] It’s easy to imagine how implausible the Japanese lean perceptions must have appeared to the U.S. automakers. Concluding that the Japanese were correct meant redesigning all of their existing plants. Other typical, smaller-scale examples of insightful contradictions in perception management can be found throughout most businesses:

Operating Instruction Imbalance: Products as basic as toothpaste include user operating instructions for consumers. And toothpaste plant workers receive written instruction and video training. Their perceptions will be managed with lean principles, initially derived from Japanese automakers. But not in the offices of the same company where knowledge workers rule. Here, often only undocumented “tribal knowledge” instructions exist for critically-strategic, business perception management tasks: defining “customers,” agreeing on their service priorities and calculating related profitability.

Inconsistent Perception/Response Processes: In a manufacturing organization, a screw that causes a product warranty return might be upgraded, re-specified and replaced before the next assembly line run. However, at the same company, customer complaints from the failure of the marketing department to coordinate promotions and pricing with the rest of the business might persist, unperceived and unresolved for months, even though consumer protection regulators have put the company on notice.

Similar Problem, Similar, Non-Obvious Solution: The elliptical nature of human perception improvement poses a subtle, cognitively awkward challenge for knowledge work throughout business. It’s similar to the challenge presented to the U.S. automakers by the Japanese perception, redefinition and management of waste in lean manufacturing: it feels more natural to dismiss the inarguable evidence of success than to accept the costly, embarrassing conclusions.

But the knowledge work standardization problem has been solved many times over the past two and a half centuries. Today, there is plenty of inarguable, historical evidence of DPM success for knowledge workers to similarly and “comfortably” dismiss, at their peril. Examples:

- Gaspard de Prony, who, near the end of the 18th century, employed tiers of increasingly-skilled mathematicians to crunch vast quantities of numbers. He centralized and specialized perception of mathematical problem solving to divide and specialize the calculation activities of workers.[8]

- Charles Babbage envisioned the first mechanical computer in the 1830s. He and Ada Lovelace pioneered the thinking that would lead others, like Alan Turing in the 1930s, to perceive the division of knowledge work necessary to enable today’s computers and their operating programs.[9]

- H. Leffingwell documented the successful standardization of a global scale advertising operation in the 1920s. Through application of similar “lean” principles later made famous by Japanese automakers, the advertiser achieved more than a five-fold increase in productivity.[10]

[1] Wikipedia, Productivity Paradox.

[2] Russell, Andrew, and Vinsel, Lee, “The Joy of Standards,” The New York Times, February 16, 2019.

[3] Wikipedia, SAE International.

[4] Heitman, William, The Knowledge Work Factory, New York: McGraw-Hill, 2019, pp. 277-278.

[5] Cusumano, Michael A., “Manufacturing Innovation: Lessons from the Japanese Auto Industry,” Sloan Management Review, October 15, 1988.

[6] Plous, Scott, The Psychology of Judgment and Decision Making, 1993, p. 233.

[7] Sternberg, Robert J., and Leighton, Jacqueline P., The Nature of Reasoning, Cambridge University Press, 2004, p. 300.

[8] Heitman, William, The Knowledge Work Factory, New York: McGraw-Hill, 2019, p. 59.

History’s lifecycle of standardization

Once innovators create successful standards, these can ultimately evolve through the four major stages of a historical life cycle:

- Standards are often created, or innovated, on a limited basis, to serve an in-house need, or a single business’ strategic objective (Sources, Category 4, “Innovations”).

- Later, if they succeed, these can evolve to become de facto industry standards (Category 3, “Preferences”).

- Over time, these can be further institutionalized by voluntary industry organizations (Category 2, “Voluntary Standards”).

- Ultimately, these standards might be mandated into contractual agreements and regulatory standards (Category 1, “Laws”).

Building codes represent a good example of this type of historical, lifecycle evolution. Other, less obvious examples include many elements taken for granted today in business: accounting rules, safety protocols, and even the marketplace definitions of “assets.” For example, today, mouse-clicks and social media followers are widely considered in the marketplace as valuable assets that can be “monetized.”

Types of standardization with examples

Rules of thumb vs. “scientific” standardization

Are valuable standards in business born? Or, are they made?

Do businesses have to wait around hoping that the innovative lightning of valuable standardization will strike within their company? Or quickly copy the breakthrough standardization strategies implemented by competitors? That’s the conventional perception: if standardization is feasible and valuable, someone will do it, or would already have done it.

But is there an alternative way to innovate and implement a process-standardization strategy? Can a standardization strategy circumvent the unpredictability of random, one-off standardization “breakthroughs”? If possible, this would routinely and reliably extend the conventional footprint of standardization and reap the resulting, time-tested benefits. It would accelerate automation, enabling more reliable digital transformation. Think of this alternative strategy as a sort of “standardization farming.” But this strategy requires a vast, unplowed field of inefficient work methods to continually replace with rigorous, scientifically developed standards. No problem. Rules of thumb provide these inefficient methods (Examples of de facto standards, above).

In fact, Frederick Winslow Taylor (1856-1915) spent his career developing and promoting precisely that predictable process-standardization strategy — minus the emphasis on automation. He published his seminal work in 1911, entitled The Principles of Scientific Management. Although this masterpiece has historically been misinterpreted, under-utilized, and overlooked, Taylor’s insistence on scientifically developed, quantitative standards for work activities is directly applicable for businesses struggling to identify digital transformation opportunities – even in modern day knowledge work.

Taylor noticed the vast drain on business efficiency that resulted from management’s widespread reliance on common rules of thumb when rigorous, scientifically accurate standards could be routinely developed as predictably valuable replacements. And so, by his own 1911 description, Taylor set out to create a management process-standardization approach based on “…the great gain, both to employers and employees, which results from the substitution of scientific, for rule-of-thumb methods in even the smallest details of the work… (to achieve)…enormous saving of time and…increase in the output…[1]”

More importantly for digital transformation today, Taylor noticed that the intangible waste of avoidable work effort was “difficult to perceive” because it left nothing behind: no scrap piles, no returned goods. Appreciating these vast, stealthy losses required, according to Taylor, “an act of memory, an effort of the imagination [2].” That means it’s likely to go unnoticed, hiding in plain sight.

“Rules of thumb” is an effective way to understand the “unstandardized” problem. While businesses will push back against that portrayal – as they have since Taylor’s time in the late 19th century – the fact is that existing work is not un-standardized. Rather, it is under-standardized. That’s because it operates according to rules-of-thumb, de facto standards. Rules of thumb resemble a one-employee effort to standardize what is within their control. The result is a least-effort, small-scale solution to standardizing—a well-intentioned shortcut. Rules of thumb represent a direct contradiction to the organization-wide, maximum-effort strategy to achieve a massive-scale productivity gain from the development of quantified, scientific standards.

[1] Taylor, Frederick Winslow, The Principles of Scientific Management, London: Akasha Publishing, 2008 (reprint), 1911 original, p.19.

[2] Ibid, p.5.

Deliberate business standardization

Businesses consciously adhere to a wide range of diverse, deliberately-developed standards—some voluntary, others mandated by law. At the same time, they often seek to deliberately establish standards as a part of their competitive strategy. If successful, the business might establish its product or service as a wider standard for the industry, the region, or an entire category. Consider the examples below:

Generic standardization includes a product or service that defines an entire, often new, class of similar things:

- Kleenex describes an entire class of facial tissues.

- Band-Aid, invented in 1920, describes an entire class of adhesive bandages.

Brand standardization includes the visual, audio, or linguistic characteristics which define a company or category of products:

- The Shell Oil Company logo requires no text, characters, or symbols for recognition, worldwide.

Some brand standards are so powerful that the owners temporarily modify them, disrupting standard customer expectations to increase brand recognition and awareness:

- Coca-Cola temporarily relabeled its cans with people’s first names using its famous logo script.

- Snickers candy bars were similarly rebranded with words such as “Hungry” and “Satisfies.”

Industry standardization comprises the generally-accepted practices and/or technical specifications adopted across an entire industry:

- The financial services industry maintains standards for the transfer of funds by means of its Automated Clearing House (ACH) network.

- The tire industry maintains technical standards (such as P-metric and Euro-metric) for the sizes of its products.

Interested in a deep dive into banking standardization? Be sure to check out this case study here: How a community bank CEO standardized processes to gain 21% capacity

Market standardization is similar to industry standardization (above), except that its standards pertain to market segments, which might cut across industries:

- Manufacturers of products such as electrical equipment often produce different versions of the same device to meet the differing needs of residential versus commercial markets.

- Professional service standards and licensing requirements often vary by market: General practice physicians must meet different standards than those promulgated for specialized surgeons.

Other business standardization case studies of real-life projects can be found below:

- Luxury Products Retailer, Finance Group & Procurement: Strategic Standardization Simplifies Reporting & Improves Operating Cost by 20%

- Worldwide Retailer, Shared Services: Design and implementation of a standardization “roadmap” for global operations

- Consumer Packaged Goods: Standardization Unlocks Unrealized Benefits of ERP Technology Upgrade

- Electronics Distribution & IT Services: Standardization Improves On-time Delivery 30%

- Auto Parts, Tire Manufacturing: Standardization and Intelligent Automation Increase Production, Slash Downtime

The importance of standardization in business

The feasibility of standardizing knowledge work involves profoundly significant implications for strategy across virtually all aspects of the enterprise. It opens up a wealth of options and opportunities that offer breakthrough performance and competitive gains. Although too numerous to record in their entirety, the following list of implications will stimulate thinking and begin the challenging task of changing management perceptions.

- Management approach. Knowledge work is currently perceived as “impossible to standardize.” If true, that requires a management approach based on broadly defined initiatives that leave the details up to the workers and management. They will improvise, using rules of thumb. And the optimal management approach is incentive-oriented. Think of workrooms that pay on a piecework basis. No, you likely won’t find knowledge workers paid by the piece. However, they are commonly rewarded for their “best efforts” at achieving progress toward the objectives of broadly-defined initiatives. And yet, two thirds of their knowledge work can be standardized and managed at the activity level. This offers the advantage of reduced waste, rework, and excess variance for identical activities. Additionally, a large share of these rote activities can be automated.

- Automation strategy. Standardization rapidly enables a wide range of new, currently under-utilized, low-cost, near-term automation options that deliver substantive gains with minimal risk. For example, standardizing the alignment of existing, under-utilized data sources with activity-based operations enables the application of new, “no code” Business Intelligence (BI) technologies. These rapidly deliver insights that help increase productivity and manage profitability at unprecedented levels of precision. And robotic process automation (RPA) can perform 20 percent or more of knowledge work activities after these are standardized. Furthermore, these automation technologies can be implemented incrementally. Go as fast or as slow as you choose: results are realized within weeks, and disruption to operations is minimal. No more “big bang,” high-stakes system conversions, and often no systems involvement whatsoever.

- Performance goals. Standardization defines new horizons of feasibility for competitively decisive gains in productivity, flexibility, quality and speed. Recall that on the factory floor, standardization and automation combined to deliver productivity gains of 2x to 8x every few years in the early days of The Ford Motor Company.[1] For unstandardized knowledge work organizations, it is feasible to expect similar gains. Executives should target doubling productivity within a year or less.

- Unprecedented consistency. Human knowledge workers, using rules of thumb, struggle to achieve consistency. Errors permeate these operations. Late data entries, keystroke errors and faulty spreadsheet logic can erode customer experience and increase levels of compliance risk. Standardization alone will address many of these issues. Coupled with RPA and monitored with BI performance reporting, knowledge work operations can implement statistical process controls that previously were only feasible in advanced manufacturing operations.
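To make the idea of statistical process control for knowledge work concrete, here is a minimal sketch. It assumes a hypothetical daily error rate for a transaction-processing team and uses simple mean plus-or-minus three standard deviation limits; the function names, the sample figures, and the choice of three sigmas are illustrative assumptions, not a method described in this article.

```python
from statistics import mean, stdev


def control_limits(baseline, sigmas=3.0):
    """Return (lower, center, upper) limits computed from baseline samples.

    Uses the sample mean and sample standard deviation; this is a simplified
    illustration, not a full individuals/moving-range control chart.
    """
    center = mean(baseline)
    spread = stdev(baseline)
    return center - sigmas * spread, center, center + sigmas * spread


def out_of_control(samples, limits):
    """Return (index, value) pairs for samples outside the control limits."""
    lower, _, upper = limits
    return [(i, x) for i, x in enumerate(samples) if x < lower or x > upper]


# Hypothetical daily error rates (percent) once the activity is standardized.
baseline = [2.1, 1.9, 2.0, 2.2, 1.8, 2.0, 2.1, 1.9]
limits = control_limits(baseline)

# Hypothetical new observations to monitor against those limits.
flagged = out_of_control([2.0, 2.3, 3.5, 1.9], limits)
print(flagged)
```

With these assumed figures, only the third new observation falls outside the limits and would be flagged for investigation. Limits like these are only meaningful once the underlying activity is standardized enough to produce comparable measurements.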

[1] Heitman, William, The Knowledge Work Factory, New York: McGraw-Hill, 2019, pp. 277-278.

Advantages and benefits of standardization

Historically, the most valuable benefits of standardization in business deliver breakthrough competitive advantage for workers, businesses, and entire industries. Over the course of two and a half centuries, landmark breakthroughs in business standardization have been famously documented for manufacturing straight pins (Adam Smith, 1776), laying bricks (Frank Gilbreth, 1909), and assembling automobiles (Henry Ford, 1913).

Each of these standardization breakthroughs replaced informal, inefficient work methods with more rigorously—even “scientifically”—developed standards. Over the course of time, the benefits of standardization in business from these insights have been continuously harvested in the form of increased levels of automation; even brick laying is increasingly performed by robots. These standardization breakthroughs have not been overlooked; they continue to be transformational.

The single standardization process most relevant to business is that driven by innovation, as described in the History of standardization section above. In this instance, businesses or entrepreneurs develop what they believe will be a “better mousetrap”—one that impels customers to “beat a path to their door”, e.g., a faster algorithm, a tastier formula, or a more efficient supply chain.

Advantages of activity-level standardization

Henry Ford noted in his autobiography that “the greater the subdivision of industry, the more likely that there would be work for everyone.”[1] This actually speaks to the activity-level standardization in manufacturing, and the numerous benefits it confers upon the company:

- Training is simplified and cycles are reduced: workers can learn new jobs, and more jobs, and get started almost immediately. Similarly, the operation can better accommodate a diversity of worker skill levels.

- Short tasks (anywhere from one to eight minutes in duration) enhance simplicity and make it easier to fit them to individual workers’ skills.

- Overall standardization of activities allows for greater scheduling flexibility; management can easily move workers, depending upon needs.[2]

In modern production operations, these brief, consistently-timed activities deliver numerous benefits, including:

- Line balancing, with easier-to-achieve continuous-flow production.

- Outsourcing: unbundling work activities increases the opportunities to outsource them.

- Automation: Simple, short, and repetitive tasks are ideally suited to machines and robots.

Benefits of standardization: quantifying the potential impact

There are two simple ways to quantify the potential impact of standardization in business:

- The prospective knowledge worker labor reductions related to decreases in “virtuous waste” activity – the well-intentioned corrections, rework and excess variance that result from rules of thumb-based operating standards.

- The current cost to shareholders, i.e., the erosion of earnings and market value attributable to virtuous waste activities.

Knowledge worker labor reductions

Three sources of reductions are available to businesses adopting standardization and the related technologies that capitalize on it: standardization only (without technology), business intelligence (BI), and robotic process automation (RPA). Typical, highly conservative estimates are outlined below for each source:

- Standardization only (without technology): At least 15 percent of knowledge worker labor can be reduced by documenting business processes, reducing the root causes of errors and rework, and ensuring that internal best practices are transferred across the workforce.

- Business intelligence (BI) technology: After standardization of data sources, BI can be deployed to help reduce excess variation in the efforts of individual knowledge workers. When individual employee performance is unmeasured, variance for identical work is typically excessive. The variance between the top and bottom quartiles often falls in the 2x to 7x range for simple operations such as transaction processing. More complex processes such as sales or risk underwriting can vary much more. At least 15 percent more in labor savings can be captured by deploying basic BI performance dashboards and key performance indicators (KPIs).

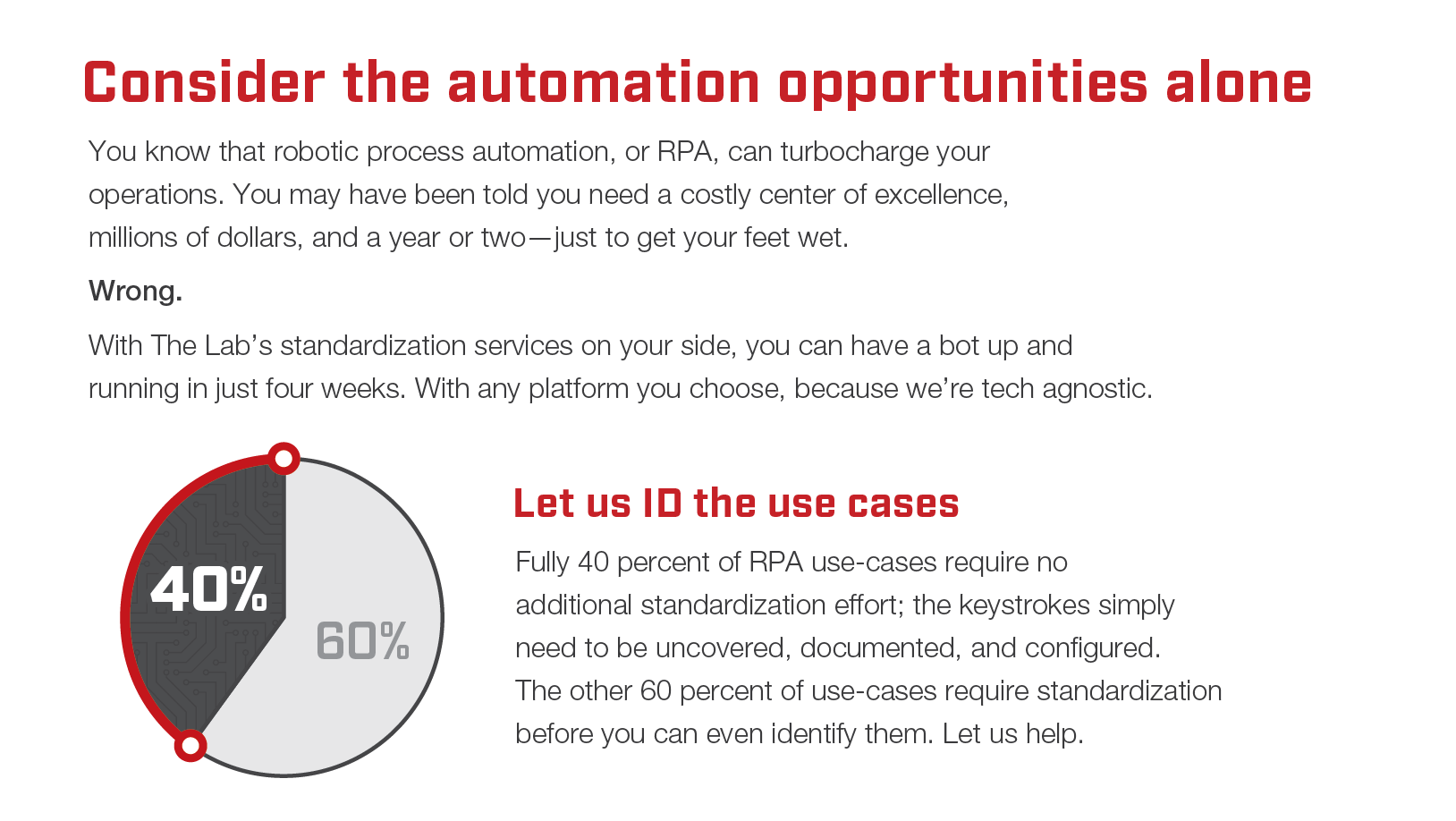

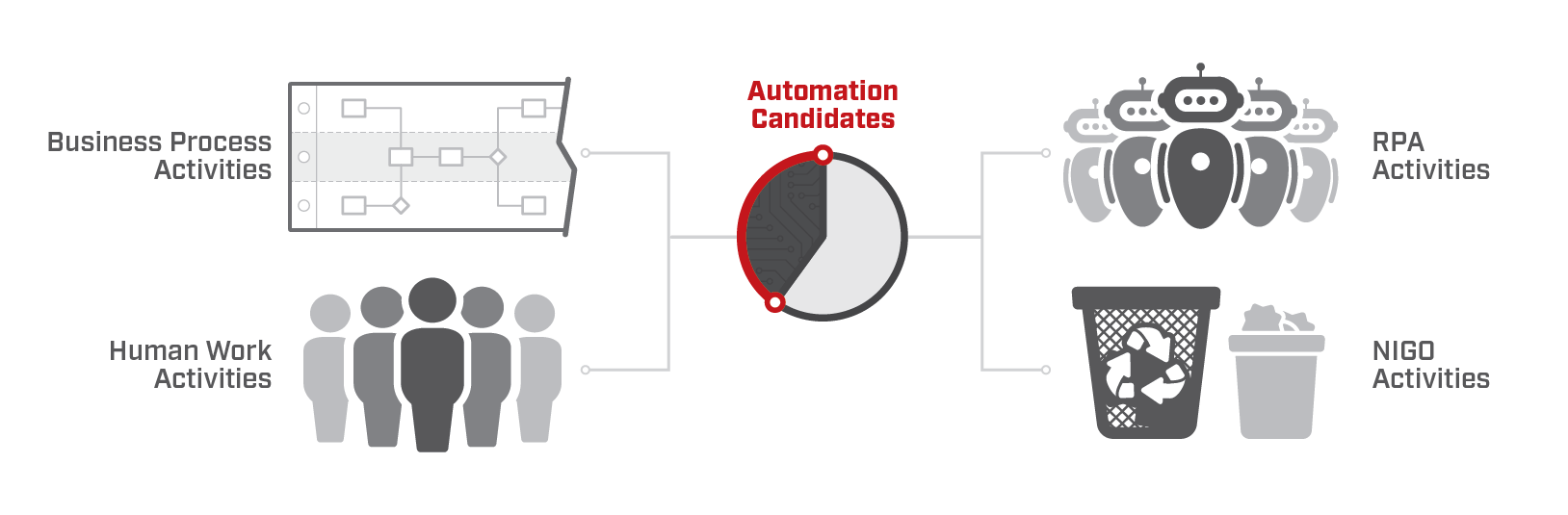

- Robotic process automation (RPA) technology: An additional 20 percent labor saving is available from deployment of basic RPA. This saving requires the standardization outlined in the first item above (standardization only) as a prerequisite. The documentation helps identify RPA candidates, and the standardization of work activities creates more candidates, also known as “use cases.”

Of course, there is nothing that prevents pursuit of all three labor savings opportunities. The benefits are additive, offering the prospect of a 50 percent labor savings, or a doubling of productivity.
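The arithmetic behind that claim can be sketched in a few lines. The three percentages are the conservative estimates quoted above; the function names are illustrative, and the conversion from labor savings to a productivity multiple assumes the same output is produced with the remaining share of labor.

```python
# Conservative estimates quoted above, as shares of knowledge worker labor.
SAVINGS = {
    "standardization_only": 0.15,
    "business_intelligence": 0.15,
    "robotic_process_automation": 0.20,
}


def total_labor_savings(savings):
    """Add the savings together, treating them as additive (as the text does)."""
    return sum(savings.values())


def productivity_multiple(labor_savings):
    """Output per unit of labor if the same output needs (1 - savings) of the labor."""
    return 1.0 / (1.0 - labor_savings)


total = total_labor_savings(SAVINGS)
multiple = productivity_multiple(total)
print(f"{total:.0%} labor savings -> {multiple:.1f}x productivity")
```

A 50 percent reduction in labor for the same output is what makes the saving equivalent to a doubling of productivity.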

Lack of standardization cost to shareholders

Based on only one improvement cited above – the standardization of knowledge work (without technology) – the cost of “virtuous waste” squanders some $3 trillion in value among the Fortune 500 alone:[3]

- Ten million knowledge workers are employed by the Fortune 500.

- Thirty percent of their work activities consist of avoidable waste (also known as “virtuous waste”).

- Three million full-time equivalent workers perform avoidable waste activities.

- $180 billion is spent on compensation to these workers.

- Twenty percent of earnings are diverted to virtuous-waste compensation.

- Thus, a minimum of $3 trillion in value to shareholders is lost to virtuous waste.
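The chain of figures above can be checked with simple arithmetic. The inputs below are the numbers quoted in the list; the derived values (compensation per worker, total earnings, and the ratio of lost value to wasted compensation) are implied by those numbers rather than stated in the source, so treat them as a consistency check and not as additional data.

```python
# Figures quoted in the list above.
KNOWLEDGE_WORKERS = 10_000_000   # employed by the Fortune 500
WASTE_SHARE = 0.30               # share of activities that are avoidable waste
WASTE_COMPENSATION = 180e9       # dollars spent on virtuous-waste compensation
EARNINGS_SHARE = 0.20            # share of earnings diverted to that compensation
VALUE_LOST = 3e12                # dollars of shareholder value lost

# Full-time equivalents performing avoidable waste (quoted as three million).
waste_fte = KNOWLEDGE_WORKERS * WASTE_SHARE

# Implied by the quoted figures, not stated in the source:
compensation_per_fte = WASTE_COMPENSATION / waste_fte
implied_total_earnings = WASTE_COMPENSATION / EARNINGS_SHARE
implied_value_multiple = VALUE_LOST / WASTE_COMPENSATION

print(f"Waste FTEs: {waste_fte:,.0f}")
print(f"Implied compensation per FTE: ${compensation_per_fte:,.0f}")
print(f"Implied total earnings: ${implied_total_earnings / 1e9:,.0f} billion")
print(f"Implied value-to-compensation ratio: {implied_value_multiple:.1f}x")
```

On these inputs, the $3 trillion figure corresponds to valuing each dollar of wasted compensation at roughly 16 to 17 dollars of market value, which is the step a skeptical reader would want to examine first.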

Of course, this figure for shareholder virtuous waste costs could double ($6 trillion) or triple ($9 trillion) if the benefits of BI and RPA technologies are applied to the Fortune 500 knowledge worker population. And this is where belief bias is likely to enter the picture, acting as the skunk at the picnic. Compared to the total market value of the Fortune 500 ($21 trillion), these cost figures become so large as to strain conventional perception and reduce the plausibility of the estimates. It doesn’t matter that there are numerous historical precedents in manufacturing; it is easier to dismiss the possibility that these figures may be feasible. After all, conventional logic says that if someone could have done it, then someone would have done it. Markets are efficient.

Thankfully, over the past century, businesses from Ford through Apple have created value by ignoring this conventional logic.

[1] Heitman, William, The Knowledge Work Factory, New York: McGraw-Hill, 2019, p. 236.

[2] Heitman, William, The Knowledge Work Factory, New York: McGraw-Hill, 2019, p. 236.

[3] Heitman, William, The Knowledge Work Factory, New York: McGraw-Hill, 2019, p. 3.

Process and data standardization: How to do it

Business standardization challenges span customer experience, data format/quality, employee productivity variance, and technology implementations alike. Under-standardized data blocks the path to automation and makes the realization of value from data one of the biggest challenges business executives face today.

Knowledge-work employees typically spend a third of their day on unnecessary rework, squandering time and resources, due to poor data quality, missing information, and under-standardized business processes. This is all the more apparent when you realize that 70 percent of knowledge-work activities are similar, repetitive, and ripe for standardization.

When you improve standardization, you improve your entire business.

Standardizing process and data for technology implementation

Under-standardized systems—with under-utilized capabilities and features—are another opportunity that hides in plain sight. This standardization is the prerequisite for all modern technology implementations, although it’s often overlooked and ignored, because end-buyers are promised miracle solutions by technology companies which claim to provide “instant standardization,” which never materializes.

This is the case whether it’s an ERP for a supply-chain company, a core banking system, or an agency-management or claims-processing system for an insurance company.

- Under-standardized System Implementation Example 1 – Banking:

- Bank A and Bank B have the exact same core system installed, but the workflows are configured completely differently, even though they have branches directly across the street from one another:

- Bank A could process deposits with a total of 20 clicks through the workflow, whereas Bank B takes 37 clicks—while doing a less-thorough job of papering the audit trail for that process.

- Under-standardized System Implementation Example 2 – Fortune 100:

- A global conglomerate has seven different ERPs: one for each region in which it operates. Each one is configured completely differently, even though they’re from the same ERP provider.

- Region 1 has free-form fields where field engineers enter service requests. Region 2 has standardized drop-downs that they can select from, for different service types. Region 2’s standardized configuration enables quicker automation, whereas Region 1’s free-form approach requires ground-up standardization as a prerequisite.

And what about ancillary systems, such as CRM, A/P, or HRIS? These are hardly ever integrated into the core-system workflows. This results in the “human glue” that companies employ to cobble together systems at the user-interface level.

Standardizing business processes: Time-tested, often overlooked, but more important than ever

Standardizing knowledge-work business processes is possible—although it is challenging for even the most experienced lifelong lean Six Sigma expert. That’s because the processes themselves aren’t always tangible or visible as they are in, say, manufacturing. Knowledge-work “assembly lines” exist behind computer screens, emails being exchanged, and pieces of paper handed back and forth between cubicles.

Many businesses in the United States reduced their investments in internal improvement/lean Six Sigma capabilities through the 2010s, as more and more new technologies became available. The thinking was that the new technology would solve all of their business needs. Quite the opposite has by now become apparent: businesses now have more systems than they’ve ever had before, and more headcount than they’ve ever had before.

When seeking to standardize business processes, the following knowledge-work elements must all be considered:

- Rules and logic

- Customer segments

- Scheduling: People, production

- Know-how/best practices

- Customer experiences and touchpoints

- Workflow rules

- Individual job roles, responsibilities, and tasks

Standardizing all of the above will dramatically reduce informal process “rules of thumb” and the reliance on embedded (and often hoarded) tribal knowledge that walks out of your organization when people leave. Plus, this yields significant opportunities to increase capacity and efficiency, even without changing any underlying existing technology configuration. And finally, it prepares your organization for the deepest-level “nano-scale” automation possible.

Standardizing data to enhance and automate reporting

Standardizing data is equally important. Poor data quality (such as improper categorization, missing fields, and missing metadata) not only slows daily operations; it also blocks the adoption of technologies such as AI, machine learning (ML), automation, and advanced analytics.
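As a rough illustration of what front-end data standardization can look like, the sketch below maps free-form category entries to a standard list and flags missing fields. The field names, the category mapping, and the `standardize` function are hypothetical, chosen only for this example; a real implementation would depend on the systems and data involved.

```python
# Hypothetical required fields and category mapping, for illustration only.
REQUIRED_FIELDS = ("customer_id", "service_type", "requested_on")
CATEGORY_MAP = {
    "install": "Installation",
    "installation": "Installation",
    "repair": "Repair",
    "fix": "Repair",
}


def standardize(record):
    """Return (cleaned_record, issues) for one free-form service request."""
    # Flag required fields that are absent or blank.
    issues = [
        f"missing {field}"
        for field in REQUIRED_FIELDS
        if not str(record.get(field) or "").strip()
    ]

    cleaned = dict(record)
    raw = str(record.get("service_type") or "").strip().lower()
    if raw:
        if raw in CATEGORY_MAP:
            # Replace the free-form entry with its standard category.
            cleaned["service_type"] = CATEGORY_MAP[raw]
        else:
            issues.append(f"unrecognized service_type: {raw!r}")
    return cleaned, issues


good, good_issues = standardize(
    {"customer_id": "C-1", "service_type": " Fix ", "requested_on": "2024-01-05"}
)
bad, bad_issues = standardize({"customer_id": "", "service_type": "upgrade"})
print(good["service_type"], good_issues)
print(bad_issues)
```

Records that come back with an empty issue list are ready for downstream reporting or automation; the rest are the corrections that standardized data capture is meant to prevent.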

Another essential target for standardization is reporting. Identifying the vital few “Super KPIs,” performance targets, and improvement plans will help to pare down the current glut of reporting—and drive proactive management strategy.

Process mapping to accelerate standardization

Using templates and IP-assets derived from past engagements will speed the documentation of organizations, processes, and data—enabling the direct comparison of KPIs, best practices, and automation opportunities.

Standardization and process mapping go hand-in-hand. The template-based approach accelerates the mapping of work activities, job positions, data, technology, and customer touch-points, as well. Process mapping makes it easy to compare existing processes to industry best practices—which again link back to standardization and automation.

A template-based approach can be used to build end-to-end process maps in as little as six weeks.

The Lab can standardize your business processes, fast.

Since 1993, The Lab’s expertise in improving efficiency in white-collar work has contributed to global businesses reclaiming capacity, improving margins, and achieving sales growth.

The Lab specializes in process and data standardization for knowledge-work-centric businesses and processes, like those in banks, credit unions, insurance companies, and even professional services companies and supply chain businesses. By delivering our Knowledge Work Standardization® methodology, we accelerate strategic benefits for C-Suite clients. We incorporate process improvement, intelligent automation (RPA), artificial intelligence (AI), and data analytics to optimize organizations.

You can read more on our business standardization services here: The Lab’s business standardization service offering.

With The Lab as your process improvement partner, you can rapidly standardize:

Knowledge Work

- Office work, activities, tasks

- At least 60% are similar, repetitive

- Ideal for digitization—if standardized

“Nano-Scale” Activities, Tasks

- Activities, tasks: Two-minute (or less) duration

- Copy, paste, transfer: Keystrokes, mouse-clicks

- One-third of typical office tasks are corrections

- “One-off” activities and tasks that resist automation

- The Lab standardizes and automates activities, reduces variance and drudgery

End-to-End Business Processes, Workflow

- Processes are sets of sequenced activities

- Work often arrives not-in-good-order (NIGO)

- 30% of activities are NIGO corrections (rework)

- Most NIGO is avoidable—with standardization

- Standardize for robotic process automation (RPA)

Organization Data, Design

- Data-capture methods are analyzed and upgraded

- Front-end data-capture standardization reduces NIGO

- Implement deep meta-tagging for KPI measurement and predictive analysis

The best way to appreciate the power of knowledge work standardization is to see it for yourself.

We invite you to book your 30-minute screen-share demo. Get all your questions answered by our friendly experts. And see how The Lab delivers all this from our U.S. offices in Houston, with nothing outsourced or offshored—in just weeks.

Simply call (201) 526-1200 or email info@thelabconsulting.com to book your demo today!